Tech Revolt spoke with leading experts across marketing, cybersecurity, and communications to explore the rise of AI-generated human videos. This in-depth feature examines the opportunities, risks, and ethical challenges shaping digital trust in today’s rapidly evolving media landscape.

By Kasun Illankoon, Editor in Chief at Tech Revolt

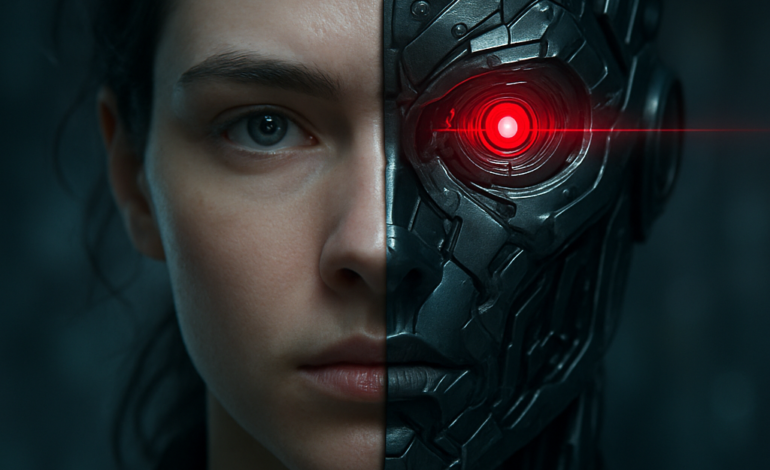

In an age where artificial intelligence continues to reshape how we communicate, the emergence of AI-generated human videos stands as one of the most transformative — and contested — technologies of our time. These synthetic videos, featuring lifelike digital avatars that mimic human expressions, voices, and mannerisms, are beginning to revolutionise marketing, corporate storytelling, telehealth, and security. Yet, alongside the opportunities they unlock lies a mounting battle: safeguarding digital trust in a world where seeing is no longer believing.

Across the Middle East and beyond, businesses and institutions are grappling with how to harness the creative potential of AI-generated human videos while mitigating the risks posed by misuse, misinformation, and identity threats. The voices of industry experts and leaders reveal a complex landscape of innovation, ethical dilemmas, and urgent calls for proactive governance.

Unlocking New Frontiers in Engagement

“AI-generated avatars can add a dynamic layer to customer engagement, particularly for on-demand content, multilingual support, or hyper-personalised messaging,” says Adam Taylor, Director of Marketing at IFZA. His observations capture the growing enthusiasm among brands to leverage this technology for scalable, cost-effective storytelling.

Taylor explains how AI allows for rapid production of content that can be customised across languages and styles, helping businesses meet the demands of increasingly segmented markets. “We see a future where marketers shift from ‘mass messaging’ to ‘mass intimacy’ — personalised communication at scale, enabled by AI.”

Similarly, Alex Jena, Chief Strategy Officer at dentsu MENAT, emphasises that the true creative advantage lies not in merely producing synthetic content but in preserving authenticity. “The opportunity isn’t just to personalise at scale — it’s to do it in a way that still feels real, still connects, and still respects the intelligence of the audience.”

Yet both experts caution against complacency. “Personalised doesn’t necessarily mean personal,” Jena warns. Synthetic videos, however polished, risk feeling “templated” or “synthetic,” especially in cultures where emotion and nuance are paramount.

Ethical Boundaries and Trust in Healthcare

In healthcare, the stakes for trust and transparency are even higher. Rania Roxana Akkela, Group Marketing Director at Medcare Hospitals and Medical Centres, outlines the promise and perils of AI avatars in patient communication.

“Telehealth can assist healthcare professionals in using resources more efficiently,” she says, highlighting how AI-generated avatars can streamline prescription refills and chronic case management. However, Akkela is clear that patients must understand these avatars are not human — a distinction essential to maintaining trust.

“Healthcare is all about trust and transparency. Patients should not feel deceived,” she stresses. Safeguards such as disclaimers within avatar interactions and rigorous vetting by medical professionals are vital to uphold ethical standards in AI deployment.

The Growing Threat of Deepfakes

While AI-generated human videos offer exciting possibilities, they also open a Pandora’s box of risks, most notably deepfakes: hyper-realistic synthetic videos used maliciously. Haider Pasha, Chief Security Officer at Palo Alto Networks, describes the sophistication of these threats.

“Attackers are using AI-generated lip-syncing, face-swapping, and voice cloning to launch smarter, more dangerous attacks,” he says, citing instances of cybercriminals impersonating executives to steal funds or sensitive data. “Deepfake videos can impersonate individuals, enabling social engineering attacks that traditional security tools may not catch.”

The challenge is compounded by the rapid advancement of AI technology, which outpaces many organisations’ ability to detect and respond effectively. Santiago Pontiroli, Lead TRU Researcher at Acronis, notes the industrialisation of these tactics. “Criminals aren’t just experimenting; they’re actively using generative AI to craft tailored attacks that bypass traditional defences.”

Fortifying the Defences

Experts agree that combating the misuse of AI-generated videos demands a multi-layered, proactive approach. Ezzeldin Hussein, Regional Senior Director at SentinelOne, advocates for combining advanced technological safeguards with clear organisational policies.

“Using sophisticated machine-learning tools helps identify subtle inconsistencies or digital manipulation signatures,” Hussein explains. “Implementing digital watermarking and content provenance standards enables traceability and authenticity verification.”

Employee training is another crucial pillar. Haider Pasha highlights the importance of awareness: “Helping teams understand how to spot and report AI-generated video threats is critical.” Organisations must also strengthen access controls and authentication methods to reduce vulnerabilities.

The Role of Brands in Protecting Digital Trust

Brand risk and reputation consultants stress the urgency for companies to set guardrails before crises occur. Gerry McCusker, Managing Director of The Drill, points out that many brands remain ill-prepared for AI-driven reputation challenges.

“Brands need to stop being dazzled by AI’s sparkle and assess its threat potential just as earnestly,” he warns. Clear AI policies guiding the ethical use of synthetic video content are essential, alongside robust crisis communication plans to manage any fallout.

Adam Taylor from IFZA echoes this sentiment, underlining the necessity for internal protocols. “Any adoption of such tools requires this as a minimum to preserve trust and credibility.”

Navigating Cultural Nuance in a Synthetic Age

The Middle East’s rich tapestry of cultures, languages, and traditions adds another layer of complexity to the adoption of AI-generated human videos. Alex Jena of dentsu MENAT underscores this point, noting that cultural and emotional resonance is crucial for brands in the region.

“In a region where culture, emotion, and context carry real weight, the risk of synthetic content feeling hollow or unconvincing is amplified,” Jena explains. This calls for brands to not only personalise content at scale but to ensure it “still feels real” and connects authentically with diverse audiences.

Adam Taylor of IFZA adds that this is where human oversight becomes indispensable. “While AI-generated avatars offer scalability and speed, they should enhance, not replace, genuine human connection — especially in cultures that value personal relationships and trust.”

The Fine Line Between Innovation and Risk

As with any rapidly evolving technology, the excitement around AI-generated human videos is tempered by the potential for misuse. Rob T Lee, Chief of Research at the SANS Institute, highlights the emerging threat posed by nation-state actors.

“Highly accurate deepfakes are already being weaponised,” Lee warns. He references North Korean groups using synthetic media to drain cryptocurrency wallets and conduct espionage, illustrating how sophisticated and covert these attacks have become.

This underscores the urgent need for organisations to rethink their cybersecurity frameworks. Santiago Pontiroli of Acronis stresses that technology alone isn’t enough.

“Detection tools are improving, but organisations must also invest in training and awareness,” Pontiroli says. “Security should be embedded across departments — not just IT — because social engineering via synthetic videos targets human behaviour as much as technical vulnerabilities.”

Healthcare on the Frontlines of Trust

Within healthcare, where misinformation can have life-or-death consequences, the rise of AI-generated human videos presents a uniquely delicate challenge.

Rania Roxana Akkela of Medcare Hospitals articulates the stakes: “Synthetic videos can undermine patient trust, especially if fake testimonials or misleading information circulate.” To counter this, Medcare has introduced stringent monitoring and watermarking of video content to assure authenticity.

The healthcare sector’s cautious yet innovative approach to AI avatars — used for appointment reminders or wellness advice — balances efficiency with ethical responsibility. “Patients need to know when they’re interacting with AI,” Akkela insists, “and those interactions must be transparent and medically vetted.”

The Role of Surveillance and Security

While marketing and healthcare focus on engagement and trust, the physical security sector confronts AI-generated videos from a technical perspective. Tertius Wolfaardt of Axis Communications describes how the company integrates AI carefully to enhance surveillance without sacrificing human judgement.

“We view AI as a tool to improve situational awareness and incident response — not a replacement for human operators,” Wolfaardt explains. Axis’s Edge Vault technology, which cryptographically signs video streams, safeguards against tampering and enhances the integrity of surveillance footage.

“The challenge is ensuring the security and resilience of these systems as AI-generated content becomes more sophisticated,” he adds. Axis is investing heavily in hardware and software solutions to maintain trust in video evidence and surveillance data.
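To illustrate the general idea behind signing video so that tampering becomes detectable, here is a minimal Python sketch. It is not Axis's implementation: the function names, the segment layout, and the use of a shared-secret HMAC are assumptions made for illustration, and real signed-video systems typically rely on asymmetric signatures with keys protected in device hardware. Each segment's tag also covers the previous tag, so altering, reordering, or dropping a segment breaks verification from that point on.

```python
import hashlib
import hmac

# Hypothetical sketch: HMAC-SHA256 stands in for the asymmetric,
# hardware-backed signatures a production camera would use.
CHAIN_START = b"\x00" * 32  # fixed starting value for the tag chain


def sign_segments(segments, key):
    """Return one tag per video segment, each chained to the previous tag."""
    tags = []
    prev = CHAIN_START
    for seg in segments:
        digest = hashlib.sha256(seg).digest()
        tag = hmac.new(key, prev + digest, hashlib.sha256).digest()
        tags.append(tag)
        prev = tag
    return tags


def first_tampered_segment(segments, tags, key):
    """Return the index of the first segment failing verification, or None."""
    prev = CHAIN_START
    for i, (seg, tag) in enumerate(zip(segments, tags)):
        digest = hashlib.sha256(seg).digest()
        expected = hmac.new(key, prev + digest, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            return i
        prev = tag
    if len(segments) != len(tags):
        # Segments or tags were dropped or appended after the verified prefix.
        return min(len(segments), len(tags))
    return None


key = b"demo-key-not-for-production"
video = [b"frame-data-0", b"frame-data-1", b"frame-data-2"]
tags = sign_segments(video, key)
print(first_tampered_segment(video, tags, key))  # None: footage is intact

video[1] = b"substituted-frame"
print(first_tampered_segment(video, tags, key))  # 1: the altered segment
```

The chaining is the design choice that matters here: a verifier learns not only that footage was changed but where the first change occurred.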

Rising to the Challenge: Industry Preparedness and Proactivity

Across sectors, a common theme emerges: businesses are currently playing catch-up in the face of AI-generated video risks.

Ezzeldin Hussein of SentinelOne states plainly, “Most organisations are underprepared, relying on outdated detection methods that struggle against evolving AI capabilities.”

He stresses the importance of proactive measures, including multi-layered technological defences, digital watermarking, biometric verification, and real-time monitoring. “Waiting to respond after a crisis hits is no longer an option.”

Similarly, Gerry McCusker of The Drill advocates for preemptive crisis communications planning. “Proactive preparation today can help avoid far more serious consequences tomorrow,” he notes.

Regulation and the Road Ahead

With rapid AI advances, regulators worldwide are beginning to act, though gaps remain.

Santiago Pontiroli highlights regional disparities: “The EU’s AI Act and China’s content labelling rules set important precedents for transparency in synthetic media. Yet many countries, including within the Middle East, have yet to develop comprehensive policies.”

He suggests that, beyond laws, technical measures such as Google DeepMind’s SynthID watermarking and the C2PA content provenance standard can help bolster trust. Yet technology is only one part of the equation.

“Digital literacy campaigns, stakeholder education, and cultural adaptation will be critical for long-term resilience,” Pontiroli concludes.

Shaping a Trusted Future

The rise of AI-generated human videos is undeniably reshaping communication landscapes across the Middle East and globally. The technology offers tremendous promise for personalised storytelling, efficient customer engagement, and new healthcare models. But the risks to digital trust — from deepfakes and misinformation to brand dilution and ethical breaches — demand a balanced, vigilant approach.

As Adam Taylor of IFZA puts it, “The future of AI videos is bright, but only if brands invest in quality control, transparency, and human oversight.”

Alex Jena of dentsu MENAT echoes this call for intention and authenticity: “The creative edge won’t come from AI alone — it will come from brands that know themselves deeply enough to use AI as a powerful extension of their identity.”

In an era where “seeing is believing” no longer holds true, businesses must champion digital trust as their most valuable currency. From advanced technology safeguards to ethical policies and ongoing education, the battle for trust will shape the trajectory of AI-generated human videos for years to come.

Empowering Organisations to Build Digital Trust

Building digital trust in the era of AI-generated human videos requires more than technical fixes; it demands a cultural shift within organisations. As Gerry McCusker from The Drill highlights, “Risk diligence — the duties, processes, and plans that help distinguish fact from fiction during any crisis — is the foundation for resilience.”

Companies must embed these principles across departments — from marketing and communications to cybersecurity and human resources. Only by fostering a shared understanding of AI’s capabilities and risks can businesses prepare to respond swiftly and confidently when synthetic media challenges emerge.

The Imperative of Transparency and Accountability

Transparency is key to maintaining public confidence. As Medcare’s Rania Akkela stresses, “Patients should be made well aware when interacting with AI avatars and understand their limitations.” This honesty builds trust rather than eroding it.

Brands should adopt clear disclosure policies for AI-generated content, ensuring audiences can differentiate between synthetic and human-generated media. Digital watermarking and provenance tracking offer vital technical support, but the human element — honest communication and ethical use — will ultimately determine success.

Investing in Human Expertise and Ethical Innovation

While AI enables remarkable efficiencies, it cannot replace human creativity and ethical judgement. Alex Jena of dentsu MENAT warns against “flattening brand identity” by relying on generic off-the-shelf avatars. Instead, brands must “train their own models, embedding tone, values, and voice” to retain distinctiveness and emotional resonance.

Human input throughout the creative process — from prompt engineering to quality control — will ensure AI-generated videos remain authentic and engaging. As IFZA’s Adam Taylor puts it, “AI-generated avatars should enhance, not replace, real human connection.”

Heightened Security Vigilance: A Critical Layer

Haider Pasha, Chief Security Officer for EMEA & LATAM at Palo Alto Networks, reiterates that deepfake impersonation can slip past traditional security tools. He urges organisations to scrutinise visual content with the same rigour as suspicious emails or links, combining technical controls with employee awareness to defend against these sophisticated identity threats.

Collaborative Efforts and Industry Standards

Addressing the challenges of AI-generated synthetic videos requires collaborative action. Governments, industry bodies, and tech companies must work together to develop robust regulations, share best practices, and invest in detection technologies.

Emerging frameworks such as the EU AI Act and China’s content labelling rules provide valuable templates, but widespread adoption and enforcement are necessary to combat misinformation effectively.

Moreover, industry players like Axis Communications and SentinelOne demonstrate the importance of innovation in security infrastructure, combining hardware and software solutions to protect the integrity of video content.

A Call to Action for the Future

The technology behind AI-generated human videos is advancing at a breathtaking pace, offering unprecedented opportunities for storytelling, engagement, and efficiency. Yet with great power comes great responsibility.

Businesses in the Middle East and worldwide must rise to the challenge of balancing innovation with integrity. By investing in advanced safeguards, fostering transparency, cultivating human creativity, and preparing for emerging threats, organisations can safeguard digital trust — the cornerstone of meaningful connections in the digital age.

As we step into this new frontier, the question is not whether AI-generated human videos will reshape our communication landscape, but whether we will shape the technology in ways that honour authenticity, protect trust, and empower humanity.